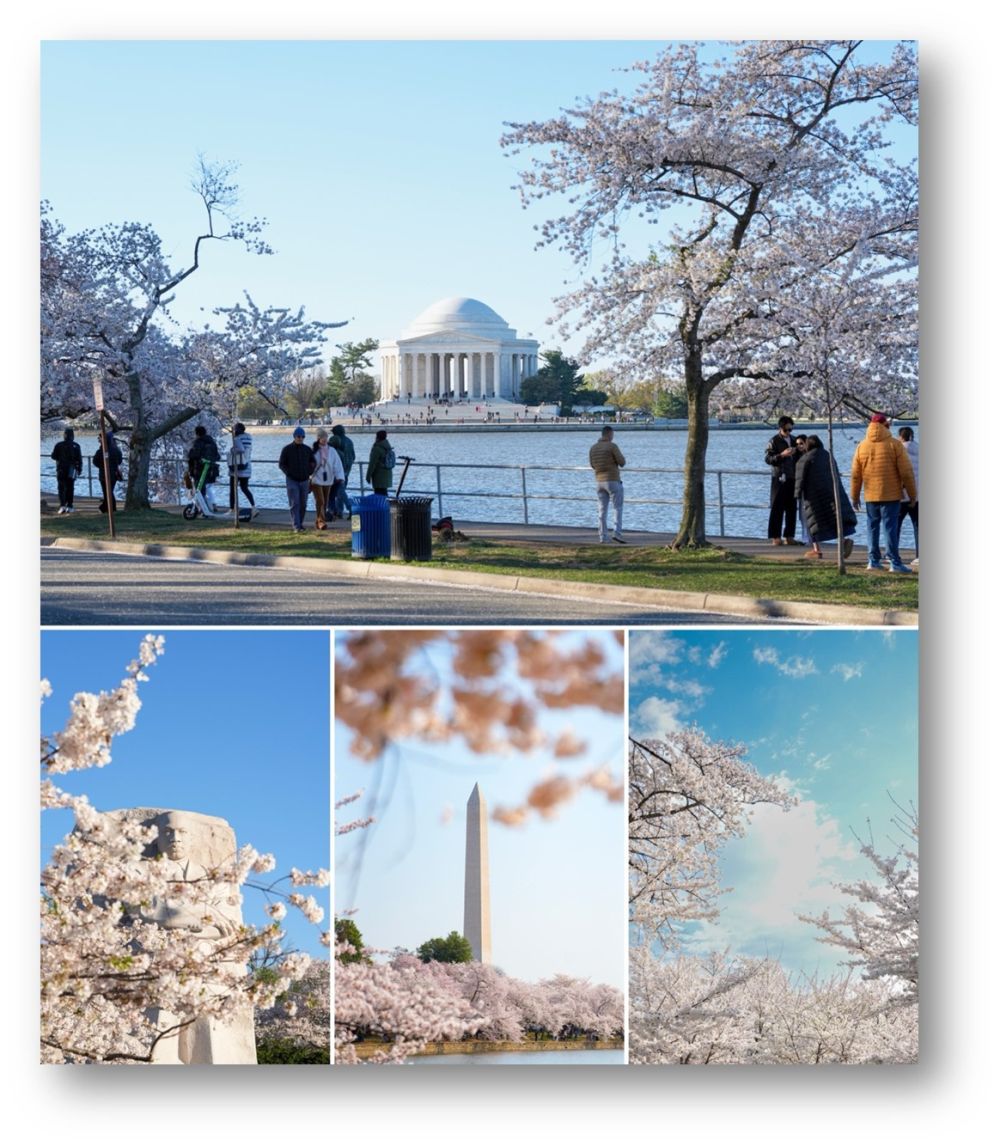

Spring in Washington, DC is one of my favorite times of year. The cherry blossoms, the energy, the sense that something new is always around the corner. It never gets old.

The March 2026 edition is arriving a little later than usual, and I appreciate your patience. I spent the early part of this week at the IAPP Global Privacy Summit, and it did not disappoint. The conversations happening there reflect just how much is moving in our space right now: AI governance, privacy enforcement, and agencies actively seeking input on consumer protection.

I am also in the middle of a whirlwind college tour with my youngest son. We have just wrapped up visits in the Northeast and are now making our way through the Midwest. It is the kind of trip that reminds you how fast time moves and how much is happening all at once, both in life and in the news.

That is exactly why we built this newsletter. When you are on the road, in back-to-back meetings, or just trying to keep up, ICYMI is designed to make things a little easier. The goal has always been to track the key issues in a digestible way, something you can get through during a commute, a coffee break, or in between calls.

That same philosophy guides my practice. I am genuinely as enthusiastic about what my clients are building as if it were my own company or project. The trust they place in me is something I do not take for granted, and every day I focus on helping lay the foundations that allow their businesses to grow and succeed.

This month’s Of Note feature centers on AI governance, specifically the importance of keeping your governance program alive and evolving. So much energy has gone into helping companies stand up governance frameworks, and that work matters. But governance is not a one-and-done exercise. I had the pleasure of attending a compelling presentation on exactly this topic, and I think it will resonate with many of you.

As always, if something in this edition sparks a thought, a question, or a reaction, I want to hear it. Send me a note. And if we have not connected on LinkedIn yet, I would love to change that.

With gratitude,

P.S. Here are some pictures of the cherry blossoms taken by my oldest son last week for his photography class:

Artificial Intelligence (AI)

The regulatory and enforcement landscape for artificial intelligence is moving on multiple tracks simultaneously. Federal preemption proposals, state AI companion laws, and Federal Trade Commission (FTC) enforcement actions are all advancing in parallel, and companies cannot afford to wait for a unified framework before building compliance programs. As shared below in Of Note, companies that already have programs should regularly check for drift in their AI systems and for unexpected changes to vendor AI systems.

- Federal preemption pressure is real but not yet law. Maintain state-specific AI compliance programs while watching Congressional action, because the DOJ AI Litigation Task Force signals the administration is prepared to challenge state AI statutes.

- The West Coast AI companion compliance standard is now law in Oregon and Washington effective January 1, 2027, with BIPA-scale $1,000 per-violation statutory damages and private rights of action. Any platform using generative AI or emotion-recognition to simulate sustained relationships should treat this as an immediate compliance deadline, not a future watch item.

- The FTC’s Air AI settlement and the JCPenney BIPA lawsuit together confirm that the FTC and the plaintiffs’ bar are applying existing consumer protection and biometric privacy frameworks to AI products without waiting for new AI-specific legislation. Income claims in AI marketing and facial scanning in consumer-facing tools are both enforcement targets now.

Federal

White House Releases National Policy Framework for AI, Calls on Congress to Preempt State Laws. On March 20, 2026, the White House released A National Policy Framework for Artificial Intelligence, a legislative blueprint organized around seven key concepts including protecting children, safeguarding communities, respecting intellectual property, preventing censorship, enabling innovation, focusing on workforce development, and establishing federal preemption of state AI laws. The framework explicitly calls on Congress to preempt state laws that regulate AI development, characterizing it as an inherently interstate phenomenon with key foreign policy and national security implications, while allowing states to retain authority over child safety, fraud, consumer protection, zoning, and their own government procurement of AI. The framework signals the administration’s strong preference for federal uniformity. The framework also highlights the Department of Justice (DOJ) AI Litigation Task Force as the mechanism through which the administration may challenge onerous state AI statutes. Companies currently building compliance programs around state-specific AI laws should continue doing so while watching Congressional action closely, as federal preemption legislation could significantly reshape the compliance landscape.

Data Center Community Impact Act to Study AI Data Center Expansion. Representative Bonnie Watson Coleman (NJ-12), joined by eight House cosponsors, introduced the Data Center Community Impact Act. The legislation would authorize a federal study on the environmental, economic, and public health impacts of AI data center expansion, with a focus on communities of color and low-income communities. The bill arrives as data center construction accelerates nationwide to meet surging AI infrastructure demand, with advocates pointing to real-world consequences including increasing pollution, massive water usage, and greater dirty energy consumption in host communities. The legislation is endorsed by the Natural Resources Defense Council, Climate Justice Alliance, GreenLatinos, Food & Water Watch, and Climate Revolution Action Network, signaling that AI infrastructure is increasingly being drawn into environmental justice debates.

Sanders and Ocasio-Cortez Introduce AI Data Center Moratorium Act. Senator Bernie Sanders (I-Vt.) and Representative Alexandria Ocasio-Cortez (D-N.Y.) introduced the Artificial Intelligence Data Center Moratorium Act (S. 4214). The legislation proposes that Congress place a moratorium on new and upgraded data centers used for the development or operation of artificial intelligence models that exceed certain electricity loads. The moratorium would lift only if Congress enacts legislation that requires a pre-release federal review of AI products to ensure they do not threaten health, civil rights, or the future of humanity, enacts protections to ensure the promised economic gains of AI and robotics benefit workers rather than Big Tech owners, and implements guardrails ensuring AI does not increase electricity prices, harm communities, or destroy the environment. The bill would also ban U.S. exports of AI computing infrastructure to countries that do not have safeguards in place to guarantee AI is safe and effective, workers are protected, and AI does not harm the environment. The legislation responds to rising electricity costs, which increased nearly 7% last year, with the average household paying $123 more in 2025. The moratorium is unlikely to advance; however, businesses should continue to monitor similar state initiatives.

Research and Oversight of Artificial Intelligence in Courts Act. Senators Roger Wicker (R-Miss.) and Peter Welch (D-Vt.), joined by Representative Harriet Hageman (R-Wyo.), introduced the Research and Oversight of Artificial Intelligence in Courts Act of 2026, bipartisan, bicameral legislation that would establish a 15-member task force to study the use of AI-powered speech-to-text and automatic speech recognition tools in federal courts, with a focus on privacy, civil liberties, and accuracy, and would require the panel to report its findings to Congress and the Attorney General within 18 months. The bill arrives as federal courts have already begun deploying these tools without clear governing standards, and its implications extend well beyond court reporting. If AI-generated transcripts become the official record of federal proceedings, questions of accuracy, authentication, chain of custody, and due process will directly affect how litigation is conducted, how appeals are built, and how regulatory enforcement records are created.

National Science Foundation (NSF) AI Education Act. U.S. Senators Jerry Moran (R-Kan.) and Maria Cantwell (D-Wash.) introduced the bipartisan NSF AI Education Act, legislation that if passed would direct the National Science Foundation to expand AI scholarship and professional development opportunities with a particular focus on agriculture, education, and advanced manufacturing. The bill authorizes undergraduate and graduate scholarships, creates at least five Centers of AI Excellence at community colleges and vocational schools, establishes a USDA-NSF grant program for AI research through land-grant universities, and sets an NSF Grand Challenge goal of training one million U.S. workers on AI by 2030. The legislation reflects growing bipartisan consensus that domestic AI workforce development is a national competitiveness priority and signals continued Congressional interest in sector-specific AI education pipelines beyond traditional computer science programs.

FTC Bans AI Voice Platform Air AI and Its Owners from Marketing Business Opportunities. The Federal Trade Commission (FTC) settled its August 2025 lawsuit against Air AI, an AI voice calling platform marketed to entrepreneurs and small businesses, with a proposed order that permanently bans the company and its three individual owners from selling or marketing any business opportunity, making earnings claims without adequate substantiation, and violating the Telemarketing Sales Rule. The FTC’s complaint alleged that since at least February 2023, Air AI falsely claimed that purchasers would or were likely to make substantial earnings, falsely represented that purchasers of the Air AI Access Card were protected by a refund or buy-back guarantee, and failed to provide required disclosure documents and earnings claims statements in violation of the Business Opportunity Rule. The proposed order includes a monetary judgment of $18 million, suspended based on inability to pay, with operators required to pay $50,000 for consumer relief. The case is a direct signal for the AI voice agent and AI SaaS sales space. The FTC will apply the Business Opportunity Rule and Telemarketing Sales Rule to AI platform vendors using income or performance claims in marketing to business purchasers, meaning any AI company using testimonials, revenue projections, or ROI claims in its marketing faces the same enforcement exposure as a traditional franchise or business opportunity seller.

State

California Governor Newsom Issues Executive Order Requiring AI Safety and Privacy Safeguards for State Contractors. Governor Gavin Newsom issued Executive Order N-5-26 on March 30, 2026, directing the California Government Operations Agency to develop, within 120 days, new state contracting processes and vendor certifications that require AI companies seeking to do business with California to attest to and explain their policies and safeguards to protect the public from misuse of their technology. Companies must demonstrate safeguards against the distribution of illegal content including child sexual abuse material, harmful bias, and violations of civil rights and civil liberties including free speech, voting, human autonomy, and protections against unlawful discrimination, detention, and surveillance. The order also requires state agencies to watermark AI-generated images and videos to prevent misinformation, and, in a pointed response to the Pentagon’s designation of Anthropic as a supply chain risk, directs California to conduct its own independent assessment of any company designated by the federal government as a supply chain risk. California may allow the company to remain a state contractor if the state does not find it to be a risk, creating a direct California procurement pathway independent of federal designations. For AI SaaS, connected vehicle, and technology companies with California state contracts or contracting aspirations, the 120-day clock for new vendor certifications is an immediate compliance planning prompt and the order’s suspension and ineligibility provisions for vendors where courts find unlawful privacy or civil liberties harm represent meaningful new contract-level risk.

Connecticut AG Issues AI Enforcement Memorandum, Putting Businesses on Notice That Existing Laws Already Apply. On February 25, 2026, Connecticut AG William Tong released a memorandum explaining how existing Connecticut laws may apply to artificial intelligence systems used in activities such as tenant screening, employment decisions, credit risk and loan determinations, insurance claims, and targeted consumer advertising. The AG specifically cited Connecticut’s civil rights laws (which apply to algorithmic decision-making in employment, housing, insurance, and lending), its Data Privacy Act (covering consumer access, deletion, correction, and opt-out rights), its Safeguards Law and Breach Notification Law (data security and breach reporting), and Connecticut’s Unfair Trade Practices Act and Antitrust Act (deceptive practices and anticompetitive AI conduct). The memorandum also notes that federal anti-discrimination statutes including the Equal Credit Opportunity Act, which requires adverse action notices when credit decisions are made using algorithmic models, may apply to AI-based decision tools. Although expressly non-binding, the memo functions as an enforcement roadmap and signals the AG is prepared to move on AI conduct without waiting for new legislation.

New York Bill Would Bar AI Chatbots from Practicing Law or Medicine and Give Users a Private Right of Action. New York Senate Bill S7263 advanced out of the Internet and Technology Committee on a 6-0 vote as part of a broader AI chatbot regulatory package, carrying significant implications for any company deploying AI tools that touch legal or medical subject matter. The bill prohibits AI chatbots from impersonating licensed professionals and bars them from providing substantive responses or advice that would constitute the unauthorized practice of law or medicine, while also mandating clear and conspicuous disclosure to users that they are interacting with an AI system, with that notice explicitly not serving as a liability shield for chatbot owners. The bill’s most consequential feature for litigation exposure is its private right of action, which would allow users to sue chatbot owners directly for damages and attorney’s fees.

Minnesota Lawmakers Move to Ban AI-Driven Surveillance Pricing at Grocery Stores and Beyond. Minnesota lawmakers have introduced two bills to prohibit AI-powered surveillance pricing, one targeting grocery stores and the other covering all other retail businesses, after a news station’s testing confirmed that two shoppers using different apps at the same Cub Foods store paid different prices for the same items. Surveillance pricing uses AI to estimate how much a given customer is willing to pay, resulting in different prices for different people based on various metrics set by the retailer. The Minnesota bills were introduced the same week as similar legislation in Wisconsin, signaling that AI-driven dynamic pricing is rapidly becoming a focal point for state consumer protection legislators across the country.

New Jersey Bill Would Ban AI-Altered Real Estate Listing Photos and Require Disclosure of Virtual Staging. The New Jersey Assembly Science, Innovation and Technology Committee has advanced Assembly Bill 4728, legislation that would prohibit sellers, landlords, and their agents from using generative AI or photo editing software to fundamentally alter images in real estate advertisements, requiring all listing photos to reflect the actual, current state of the property and be no older than five years. AI-generated virtual staging that adds furniture or non-fixed items to an otherwise accurate image remains allowed, but only with mandatory disclosure in every advertisement and an obligation to provide original unedited images to any prospective buyer upon request. Violations carry civil penalties of $500 for a first offense and up to $1,000 for each subsequent violation. For PropTech, real estate platform, and MLS clients, this bill is a direct signal that AI-generated listing imagery is moving into the regulatory crosshairs at the state level.

New Jersey Assembly Advances Three AI Bills Targeting Consumer Disclosure, Licensed Professions, and AI Companions. The New Jersey Assembly Science, Innovation and Technology Committee advanced three AI-focused bills in the same session, reflecting a coordinated state-level effort to establish AI transparency and consumer protection guardrails ahead of any federal framework. Assembly Bill 4730 would require any person or entity deploying generative AI to interact with consumers in a manner that could cause a reasonable person to believe they are communicating with a human to provide clear and conspicuous verbal or written notice at the outset of the interaction and prohibit advertising that their AI is capable of practicing any regulated professions, including law, medicine, and engineering, with violations carrying penalties up to $10,000 for a first offense and $20,000 for subsequent violations, plus treble damages. The companion Assembly Bill 4371 requires rules to govern AI’s use for these regulated professions. Assembly Bill 4732 would impose ongoing disclosure obligations on operators of AI companion platforms, requiring notification that the user is not interacting with a human not only at the beginning of every interaction but also at least every three hours throughout any continued session, with each violation carrying a civil penalty of $15,000. For clients deploying AI in customer-facing applications, the collective scope of these bills underscores that a single disclosure that the user is interacting with AI is not sufficient, and companies should ensure advertising does not represent that the AI product can serve as a substitute for a regulated “human” professional. The debate about how AI can be used for legal, medical, and other questions that are typically directed to regulated professionals continues in New Jersey, with regulators calling for careful rules around how GenAI products can respond to such questions.

Maryland Proposes Sweeping AI Toy Safety Act as the Consumer Product Safety Commission Declines to Act. Maryland legislators introduced the bipartisan Artificial Intelligence Toy Safety Act, a comprehensive regulatory framework that would apply to any device using machine learning, conversational AI, or behavioral modeling marketed to or primarily used by children. The bill would require pre-market child safety assessments, limit data collection to minimum necessary functionality, mandate 48-hour breach notification to parents, prohibit selling or transferring child data to third parties or using it to train unrelated AI models, ban content that is sexual, violent, or emotionally manipulative, and prohibit manufacturers from marketing AI toys as emotional companions or parental substitutes, with violations carrying civil penalties of up to $50,000 per violation and mandatory recalls. The bill arrives in direct contrast to a February 13 letter from the CPSC, in which Acting Chairman Peter Feldman told Senators Klobuchar, Cantwell, and Markey that the agency is neither equipped nor authorized to address non-physical harms such as psychological or emotional injury to children, leaving the regulatory field open for states to fill.

Oregon Passes AI Companion Safety Act with $1,000 Per-Violation Statutory Damages, Creating BIPA-Scale Litigation Risk. Oregon’s SB 1546 passed the Senate 26-1 and House 52-0 and awaits Governor Kotek’s signature. The bill regulates operators of AI companions, broadly defined as AI systems that use generative AI or emotion-recognition algorithms to simulate sustained, human-like platonic, intimate, or romantic relationships. It requires disclosure that users are interacting with AI, evidence-based protocols for detecting suicidal ideation, and mandatory mid-conversation interruption to deliver crisis referrals when distress is detected. For minors, operators must provide hourly reminders, prevent sexually explicit content, and avoid manipulative engagement techniques. The bill creates a private right of action with $1,000 statutory damages per violation, comparable to the statutory damages available to private plaintiffs under Illinois’s BIPA, which has produced billions in settlements. The broad definition could capture healthcare chatbots, AI tutoring systems, customer engagement platforms, and retail portals that follow up on previous interactions.

Pennsylvania Senate Passes SAFECHAT Act 49-1, Sending Nation’s First Comprehensive AI Chatbot Safety Bill to the House. The Pennsylvania Senate passed the Safeguarding Adolescents from Exploitative Chatbots and Harmful AI Technology Act, the SAFECHAT Act, by a 49-1 vote, marking the state’s first bill to comprehensively regulate AI chatbots, and it now heads to the Democrat-controlled House for consideration. The bill applies specifically to companionship-focused chatbots and requires operators to disclose the AI’s nonhuman status, issue periodic break reminders every three hours, prevent chatbots from generating sexually explicit content or material encouraging self-harm with minors, and direct users to crisis resources whenever high-risk language is detected. Notably, Governor Shapiro personally tested a chatbot that falsely claimed to be a licensed mental health professional in Pennsylvania, prompting him to request the Secretary of State open an investigation into whether chatbots are violating the state’s licensing laws.

Virginia Sends AI Bills to Governor Spanberger on AI Fraud Reporting, Employment Protections, and AI Systems Transparency. Three AI-related bills reached Governor Spanberger’s desk on March 14, 2026, with an action deadline of April 13, 2026. HB 580 expands the Division of Consumer Counsel within the Virginia AG’s Office to establish programs addressing AI fraud and abuse, including a statewide AI fraud reporting system and public education initiatives targeting impersonation scams and voice cloning fraud. For companies whose AI products could be used for impersonation or consumer-facing deception, HB 580’s fraud reporting infrastructure will increase AG enforcement visibility.

Washington Governor Signs AI Companion Safety and Content Provenance Bills into Law. Governor Bob Ferguson signed both HB 2225 and HB 1170 into law on March 24, 2026. HB 2225 requires operators of AI companion chatbots to give users clear notice that the chatbot is not human at the start of every session and at least once every three hours (hourly for minors), bars chatbots from claiming to be human, forbids sexually explicit conversations with minors, bans manipulative engagement tactics designed to build emotional dependency, and mandates protocols for detecting suicidal ideation and crisis referrals, with a private right of action modeled on Washington’s My Health My Data Act. HB 1170 requires large AI providers with more than one million monthly users to offer a free AI-detection tool and to support provenance disclosures including watermarks or embedded metadata in AI-modified content. The Washington Attorney General may enforce the law after offering a cure opportunity before civil actions continue. For AI SaaS, voice platform, and companion app clients, Washington’s signing, the same week Oregon’s SB 1546 passed, confirms that the West Coast AI companion compliance standard is now law in two states, with January 1, 2027, as the operative deadline.

Washington Governor Signs Deepfake Protection Law, Raising Civil Penalties to $3,000 Per Violation. Governor Bob Ferguson signed SB 5886 on March 16, 2026, updating Washington’s existing personality rights statute to address AI-generated deepfakes. The law expands protected personal attributes to include a person’s forged digital likeness, defined as a visual or audio representation digitally created or altered to be indistinguishable from a genuine representation of an identifiable individual, and increases civil penalties from $1,500 to $3,000 per violation, plus actual damages, profits, and injunctive relief. Protections extend to both living and deceased individuals and take effect June 11, 2026. Any company that creates, distributes, or hosts AI-generated content depicting real people, including marketing firms, media companies, and platforms hosting user-generated deepfakes, should implement consent protocols and update content moderation policies before the effective date.

Wisconsin Legislation Targets AI-Driven Dynamic Pricing at Retail. Wisconsin State Senator Kelda Roys (D-Madison) introduced legislation that would prohibit retailers both in-store and online from using AI-driven dynamic pricing to charge consumers different prices based on personal data such as age, race, salary, credit score, or purchase history. The bill targets the growing practice of retailers accessing intimate consumer details and feeding that data into AI algorithms designed to extract the highest possible price from each individual buyer. The proposal reflects a broader and accelerating trend of state-level consumer protection legislation targeting AI-enabled surveillance pricing, arriving at a moment when grocery costs and inflation remain top-of-mind for consumers and policymakers alike.

Litigation

Generative AI Use Without Counsel Does Not Trigger Privilege. In United States v. Heppner, the U.S. District Court for the Southern District of New York denied both attorney-client privilege and work product protection for legal strategy materials that a financial services executive generated using a generative AI tool without any direction from or involvement of counsel. The court reasoned that because the platform’s terms of service permitted the collection, retention, and potential disclosure of user inputs and outputs, no reasonable expectation of confidentiality existed, and without confidentiality, the foundational element of privilege collapses. The decision reinforces longstanding doctrine: entering a prompt into a commercial AI platform for legal analysis, absent counsel’s direction, is little different from running a search engine query and will not attract privilege protection. Organizations should audit which AI tools employees are using, evaluate the governing data-handling terms, and set up guardrails prohibiting use of generative AI for legal strategy without counsel’s involvement.

Work Product Protection Upheld for Pro Se Litigant’s AI-Assisted Analysis. The U.S. District Court for the Eastern District of Michigan held in Warner v. Gilbarco that AI-assisted litigation analysis prepared by a pro se plaintiff after the close of discovery was protected work product, and that sharing the materials with a public AI platform did not constitute waiver. Because the plaintiff was effectively serving as his own attorney, the court found the materials reflected his own mental impressions prepared in anticipation of litigation, satisfying the core work product standard. Critically, the court characterized the AI platform as a tool, not a person, concluding that disclosure to the tool did not meaningfully increase the likelihood that the materials would reach an adversary. Read alongside Heppner, the decision confirms that existing privilege doctrine applies to AI-generated materials without modification, while highlighting that the outcome turns heavily on who directed the AI use and under what confidentiality conditions.

JCPenney Sued Under BIPA for AI Skin-Care Analysis Tool That Scans Visitors’ Faces Without Notice or Consent. A March 2026 lawsuit filed in Illinois state court alleges that JCPenney uses an AI-powered skin-care analysis tool on its website to scan visitors’ faces and provide personalized cosmetics advice without ever informing them that the technology captures and stores their biometric information, in violation of Illinois’ Biometric Information Privacy Act. The complaint alleges that the AI tool captures, analyzes, and keeps facial geometry from website visitors without the written notice, stated purpose, or affirmative consent that BIPA requires before any private entity collects biometric identifiers, and seeks injunctive relief compelling BIPA compliance plus unspecified statutory damages. The case parallels the Neutrogena Skin360 litigation, in which the court rejected J&J’s healthcare exemption defense and held that AI facial scanning for cosmetics recommendations is a marketing and sales strategy, not medical care, establishing that the beauty tech sector is squarely within BIPA’s reach regardless of the wellness framing of the product. For any company deploying AI-powered virtual try-on, skin analysis, facial recognition, or personalized recommendation tools that process facial geometry of website or app visitors, including retailers, beauty brands, and the AI SaaS platforms powering those features, this case is a direct compliance prompt. BIPA’s written notice, consent, and data retention policy requirements apply to AI facial scanning tools regardless of where the business is headquartered, because the statute reaches any company collecting biometric data from Illinois residents.

Privacy

Privacy enforcement is no longer a future risk to be managed through policy documents alone. California regulators are issuing seven-figure fines, a twentieth state has enacted comprehensive privacy legislation, and a major class action is testing whether the gap between privacy marketing claims and actual data practices can sustain multi-count federal litigation. The message across all three developments is the same: programs must match practice.

- The CPPA’s enforcement actions against PlayOn Sports and Ford for CCPA violations make two points clear: conditioning service access on tracking consent is a violation, and redirecting users to NAI/DAA industry opt-out frameworks is not a lawful substitute for a CCPA-compliant opt-out mechanism. Connected vehicle, EdTech, and event ticketing clients are directly in the CPPA’s enforcement crosshairs.

- With Oklahoma becoming the 20th state to enact comprehensive privacy legislation and Rep. Lofgren’s federal baseline bill reintroduced, investment in scalable multi-state privacy program architecture is a business necessity now. Companies should audit whether existing programs, opt-out mechanisms, and data processing agreements extend to Oklahoma consumers by January 1, 2027.

- The Shirazi v. Meta WhatsApp class action illustrates a new trend of claims against companies whose public privacy marketing fails to match their actual internal data access practices. In this case, the plaintiff filed a multi-count complaint in federal court seeking treble damages and disgorgement. Any company making encryption or data minimization representations in marketing should audit actual access practices against those promises.

Federal

Rep. Lofgren Reintroduces Online Privacy Act to Establish National Data Baseline. Representative Zoe Lofgren (CA-18) reintroduced the Online Privacy Act on March 20, legislation that would set a national baseline for how companies collect, use, and share Americans’ personal data. The bill’s reintroduction arrives as the patchwork of state comprehensive privacy laws continues to grow, with Oklahoma becoming the twentieth state to enact a comprehensive privacy law in March, ending a nearly two-year national legislative drought, and signals continued Democratic appetite for a federal framework that would operate alongside, rather than preempt, stronger state protections. For companies operating across multiple states, the trajectory toward either a federal floor or an increasingly fragmented state-by-state regime makes investment in scalable privacy program architecture a business necessity now rather than a future consideration.

Senators Markey, Wyden, and Merkley Send Letter to Meta Inquiring about Meta’s Plans for Facial Recognition for Smart Glasses and Express Concerns with Mass Surveillance Normalization. Senators Edward Markey (D-Mass.), Ron Wyden (D-Ore.), and Jeff Merkley (D-Ore.) wrote to Meta CEO Mark Zuckerberg on March 17 demanding transparency on the company’s plans to integrate facial recognition into its smart glasses, warning that the glasses, often indistinguishable from regular eyewear, could be worn throughout the day allowing a user to scan thousands of faces with no practical way for a bystander to consent or even know that real-time identification is taking place. The senators cited an internal Meta memo revealing the company intends to release the technology and hopes that the current chaotic political environment will help avoid public scrutiny, a pointed accusation that Meta was deliberately timing a privacy-invasive product launch to minimize regulatory attention. The senators told Zuckerberg that Americans do not consent to biometric data collection simply by walking down a public street, entering a café, or standing in a crowd, and demanded answers by April 6, 2026 on how Meta would obtain affirmative consent, handle biometric data deletion, test for bias, prevent misuse, and whether it plans to allow users to build personalized facial recognition databases of known individuals. The letter coincides with a March 8, 2026, class-action suit filed against Meta alleging that Meta and Luxottica advertising claims about their AI smart glasses as “designed for privacy” are false and deceptive in light of the company’s practices of allegedly secretly storing and analyzing video recordings taken inside users’ homes.

FTC Issues COPPA Policy Enforcement Statement and Signals Forthcoming Rule Review. The Federal Trade Commission issued a policy enforcement statement clarifying how it will enforce the Children’s Online Privacy Protection Act, signaling a forthcoming rule review that could reshape compliance obligations for operators of apps, websites, and connected devices that collect data from children under 13. The statement arrives in the same month that Congress advanced the App Store Accountability Act out of the House Energy and Commerce Committee on a 26-23 vote, adding platform-level age verification to the legislative agenda alongside the chatbot safety bills advancing in Pennsylvania and New Jersey. For clients operating in the children’s app, EdTech, connected toy, or AI companion space, the combination of FTC enforcement signals and active Congressional movement on children’s digital safety means that COPPA compliance review and proactive data minimization practices should be treated as immediate priorities.

HHS and State AGs Fine Ambulance Company Over $500,000 for Data Breach, Require Enhanced Security. The Attorneys General of Massachusetts and Connecticut, in coordination with the U.S. Department of Health and Human Services, entered into settlement agreements with Comstar, LLC, an ambulance billing company, imposing fines exceeding $500,000 and requiring enhanced security protocols, privacy program improvements, and data minimization practices following a data breach. The multiagency, multistate resolution reflects the now-established enforcement pattern in which health data breaches trigger simultaneous federal HIPAA enforcement and state AG consumer protection action, compressing the response timeline and amplifying financial exposure beyond what either regulator would impose alone. Companies handling health-adjacent data, including telehealth platforms, connected device operators, and medical billing intermediaries, should treat this settlement as a reminder that breach notification alone is insufficient and that documented security and data minimization programs are prerequisites to managing enforcement outcomes.

State

California Privacy Protection Agency (CPPA) Fines PlayOn Sports $1.1 Million for Tracking Students Without Lawful Opt-Out. The CPPA issued a decision requiring PlayOn Sports, whose GoFan platform serves as the official ticketing platform for the California Interscholastic Federation and contracts with approximately 1,400 California schools, to pay a $1.10 million fine and change its practices. In the first CPPA decision to address privacy violations involving students and California schools, the CPPA found that PlayOn Sports used tracking technologies to collect personal information and deliver targeted advertisements to ticket holders, forced Californians to click agree to those tracking technologies before they could use their digital tickets or view the company’s websites, and then improperly directed users to opt out through the Network Advertising Initiative (NAI) and Digital Advertising Alliance (DAA) rather than providing its own lawful opt-out mechanism. In addition to the fine, PlayOn Sports must conduct risk assessments, provide clear and readable privacy disclosures, implement proper opt-out methods, and comply with California’s law prohibiting the sale or sharing of personal information of consumers between 13 and 16 years old without their affirmative opt-in consent. For EdTech, sports media, event ticketing, and school-facing SaaS clients, this decision delivers two critical compliance signals. First, it is a violation of California’s privacy framework to require a consumer to agree to tracking so that they can access or receive the good or service paid for by the consumer. Second, redirecting users to industry opt-out frameworks like NAI and DAA is not a substitute for providing a CCPA-compliant opt-out mechanism.

CPPA Fines Ford $375,000 for Adding Unnecessary Friction to CCPA Opt-Out Process. The CPPA issued a decision requiring Ford Motor Company to pay a $375,703 fine and change its practices after finding that Ford required consumers to complete an email verification step before it would process opt-out requests for the sale and sharing of personal information collected through its digital properties and connected vehicle services. Specifically, the CPPA alleged that Ford would not process opt-out requests at all unless consumers completed the verification. The CPPA’s enforcement theory is that this was an unnecessary step in the opt-out process that worked to discourage consumers from exercising their privacy rights and that friction itself violates the CCPA. In addition to paying the fine, Ford must provide consumers with easy opt-out methods with minimal steps, conduct an audit of tracking technologies on its website, and ensure compliance with opt-out preference signals including the Global Privacy Control. The matter arose from the Enforcement Division’s review of data privacy practices by connected vehicle manufacturers, the same review that produced last year’s enforcement action against American Honda Motor Co., and signals that the CPPA is systematically working through the automotive sector. For connected vehicle manufacturers, auto dealers, and mobility platforms collecting telematics or location data from California consumers, the Ford and Honda precedents together establish that email verification gates and multi-step opt-out processes are per se problematic under California law, regardless of the data security rationale behind them.
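For engineering teams supporting the opt-out requirements described above, the following is a minimal sketch of detecting the Global Privacy Control signal, which browsers send as the `Sec-GPC: 1` request header. The function names and the `clicked_opt_out` flag are hypothetical, and the sketch illustrates only the "no extra verification step" idea. It is not a statement of what the CPPA decision requires in any particular system.

```python
from typing import Mapping


def gpc_opt_out_requested(headers: Mapping[str, str]) -> bool:
    """Return True when the request carries the Global Privacy Control signal.

    The GPC signal is sent as the HTTP request header `Sec-GPC` with the
    value `1`. Header names are matched case-insensitively.
    """
    for name, value in headers.items():
        if name.lower() == "sec-gpc":
            return value.strip() == "1"
    return False


def should_record_opt_out(headers: Mapping[str, str], clicked_opt_out: bool) -> bool:
    """Hypothetical policy: honor either the GPC signal or an explicit click.

    No email verification or other extra step is placed in front of the
    opt-out (an assumption made for illustration).
    """
    return clicked_opt_out or gpc_opt_out_requested(headers)


if __name__ == "__main__":
    print(should_record_opt_out({"Sec-GPC": "1"}, clicked_opt_out=False))
```

A real deployment would also need to propagate the opt-out to downstream ad and analytics vendors, which this sketch does not attempt.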

California Advances Privacy Whistleblower Bill to Pay Employees Who Report Violations. California Assembly member Pilar Schiavo introduced AB 2021, sponsored by the CPPA, which would provide financial incentives for tech company employees and contractors to report violations of the state’s privacy law directly to the CPPA. The bill would set up a whistleblower bounty program modeled in part on SEC and FTC whistleblower frameworks, creating a new source of enforcement intelligence for the agency and a new category of legal risk for companies whose internal privacy compliance practices do not match their public-facing policies. For companies with California operations or California-resident employee bases, AB 2021 is a significant reason to conduct an internal audit of data handling practices, as the bill would create financial motivation for employees to surface discrepancies between documented privacy programs and actual practices before the CPPA does.

Oklahoma Becomes Twentieth State to Enact Comprehensive Privacy Law. Oklahoma Governor Kevin Stitt signed Senate Bill 546, authored by House Majority Floor Leader Josh West and Senator Brent Howard, making Oklahoma the twentieth state in the nation to enact a comprehensive consumer privacy law. The law, which is effective January 1, 2027, gives Oklahomans rights to access, correct, delete, and obtain copies of their personal data, as well as opt out of the sale of their personal data and certain targeted advertising practices. The law applies to businesses that either process personal data of over 100,000 Oklahoma consumers or process data of 25,000 consumers while earning a majority of revenue from selling data, and requires transparent privacy notices, reasonable data security practices, and consent before processing sensitive personal information, with the Oklahoma Attorney General authorized to enforce. Exemptions apply to state agencies, nonprofits, higher education institutions, and organizations working with data already regulated under federal laws including HIPAA. With Oklahoma’s enactment, companies doing business across multiple states should evaluate whether their existing privacy programs, opt-out mechanisms, data processing agreements, and privacy notices now extend to Oklahoma consumers.
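As a first-pass screening aid, the applicability thresholds summarized above can be sketched as a simple check. This is a rough illustration based only on the summary in this newsletter, not on the statutory text. The class and field names are hypothetical, and treating "25,000 consumers" as "at least 25,000" and "a majority of revenue" as "more than 50%" are assumptions.

```python
from dataclasses import dataclass


@dataclass
class BusinessProfile:
    """Hypothetical inputs for a first-pass applicability screen."""
    oklahoma_consumers_processed: int
    share_of_revenue_from_data_sales: float  # fraction between 0.0 and 1.0
    is_exempt_entity: bool = False           # e.g., state agency, nonprofit, higher education


def may_be_covered(profile: BusinessProfile) -> bool:
    """Return True if the profile meets either threshold as summarized above."""
    if profile.is_exempt_entity:
        return False
    # Threshold 1: personal data of over 100,000 Oklahoma consumers.
    meets_volume_test = profile.oklahoma_consumers_processed > 100_000
    # Threshold 2 (assumed reading): at least 25,000 consumers and more than
    # half of revenue derived from selling data.
    meets_sale_test = (
        profile.oklahoma_consumers_processed >= 25_000
        and profile.share_of_revenue_from_data_sales > 0.5
    )
    return meets_volume_test or meets_sale_test


if __name__ == "__main__":
    data_broker = BusinessProfile(30_000, 0.8)
    print(may_be_covered(data_broker))
```

A result of `True` here is only a prompt to review the statute and the exemptions with counsel, not a legal conclusion.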

Privacy Litigation

Class Action Alleges Meta and Accenture Gave Employees Backdoor Access to Supposedly Encrypted WhatsApp Messages. A class action complaint (Shirazi v. Meta Platforms) filed March 25, 2026, in the Northern District of California alleges that Meta, WhatsApp, and consulting firm Accenture secretly intercepted, read, stored, accessed, and viewed the private and end-to-end encrypted messages of WhatsApp users. The alleged activity directly contradicts WhatsApp’s foundational marketing promise that not even WhatsApp can see users’ messages and Mark Zuckerberg’s sworn Congressional testimony that Facebook systems do not see the content of messages transferred over WhatsApp. The complaint alleges that whistleblowers informed federal investigators at the U.S. Department of Commerce’s Bureau of Industry and Security that Meta employees and Accenture contractors had broad access to the substance of WhatsApp messages that were supposed to be encrypted and inaccessible, with messages viewable simply by scrolling through an internal dashboard, and that similar claims were the subject of a 2024 SEC whistleblower complaint. The complaint asserts twelve counts including the Electronic Communications Privacy Act (statutory damages of $10,000 per violation), the California Invasion of Privacy Act ($5,000 per violation), the Stored Communications Act ($1,000 minimum per person), the Pennsylvania Wiretap Act, the California Constitution, common law intrusion upon seclusion, breach of express and implied contract, the California Unfair Competition Law, and the California False Advertising Law, with the breach of contract theory grounded directly in WhatsApp’s own privacy policy promises that messages are encrypted to protect against us and third parties from reading them. 
For companies deploying privacy representations in marketing, particularly end-to-end encryption claims, this complaint is a direct warning that the gap between public privacy marketing and actual data access practices is now the central theory of a multi-count federal class action seeking treble damages, punitive damages, disgorgement, and injunctive relief.

Marketing & Consumer Protection

Two back-to-back jury verdicts against social media platforms, a wave of state AI disclosure legislation, and an accelerating FTC junk fee enforcement campaign together mark a significant inflection point in consumer protection law. The common thread is that companies can no longer rely on disclosures alone to manage liability, and the plaintiffs’ bar and regulators are increasingly finding ways to reach conduct that was previously thought to be insulated from suit.

- The New Mexico ($375M) and Los Angeles social media addiction verdicts on consecutive days represent the most significant shift in platform liability since Section 230 was enacted. The defective product framing in the Los Angeles case bypasses Section 230 immunity entirely and may influence the outcome of 2,000 pending lawsuits, making it essential that platform operators, AI companion developers, and any company whose product is algorithmically designed to maximize engagement with young users reassess their product liability exposure now.

- New York, New Jersey, Oregon, Pennsylvania, and Washington are all advancing or enacting legislation that imposes disclosure obligations, private rights of action, and per-violation statutory damages on companies deploying AI in consumer-facing contexts. The collective scope of these bills confirms that a single disclosure that a user is interacting with AI is not sufficient, and that advertising must not represent AI products as substitutes for licensed professionals.

- The FTC’s junk fee enforcement agenda is cross-sector and accelerating. Warning letters to 97 auto dealer groups, the rental housing fee rulemaking, the Xponential Fitness franchise settlement, and the Negative Option Rule inquiry all signal that pricing transparency and subscription cancellation flows will move from warning letters to enforcement actions. Businesses should audit consent, disclosure, and cancellation practices now, and companies running AI voice or outbound telemarketing campaigns across multiple states must keep jurisdiction-specific consent documentation given the circuit split created by the Fifth Circuit’s Bradford ruling on oral consent for prerecorded calls.

Federal

FCC Proposes Sweeping Restrictions on Offshore Call Centers with Major CPNI and Data Security Implications. On March 26, 2026, the FCC voted to issue a Notice of Proposed Rulemaking (NPRM) that would impose significant new restrictions on the use of offshore call centers by telecommunications carriers, VoIP providers, cable operators, and satellite broadcasters and their affiliates. The draft NPRM would mandate English proficiency standards for call takers, cap the percentage of customer service interactions handled offshore, require disclosure when calls are handled abroad, give consumers the right to transfer to U.S.-based representatives, and restrict offshore handling of sensitive consumer data including Social Security numbers, credit card numbers, passwords, MFA codes, and CPNI. The proposal also connects offshore call centers to illegal robocall operations, citing evidence that personnel trained at legitimate foreign customer service operations have used their knowledge to conduct sophisticated scam campaigns targeting Americans. Carriers, MVNOs, and VoIP providers should begin assessing their offshore call center footprints now, as the comment period will open immediately following publication in the Federal Register.

FCC Proposes Expanded Robocall Certification Requirements for All Numbering Resource Recipients. The FCC voted to issue an NPRM that would expand robocall certification and disclosure requirements beyond direct numbering recipients to all service providers that receive numbering resources, including resellers, as part of its continuing campaign to eliminate illegal robocalls at the point of origination. The extension of certification obligations to resellers is particularly significant for MVNO and wholesale providers, who would for the first time face direct FCC certification requirements around their numbering resource usage and robocall mitigation practices, creating new compliance infrastructure obligations on top of existing STIR/SHAKEN and Robocall Mitigation Database requirements.

FTC Warns 97 Auto Dealership Groups About Deceptive Pricing. On March 13, 2026, the FTC sent warning letters to 97 auto dealership groups nationwide, signaling renewed focus on deceptive pricing practices in the retail auto sector. The letters reminded the dealerships that advertised prices must reflect the total price consumers will pay, including all mandatory, dealer-imposed fees, with the only permissible exclusions being government charges like taxes and title fees. The FTC cited specific practices it considers deceptive, including prices that omit required dealer fees, incorporate rebates not available to all consumers, condition the advertised price on dealer financing, or require purchase of add-on products not reflected in the headline price. The agency framed the effort as part of a broader price transparency initiative spanning rental housing, ticketing, hotels, grocery delivery, and now auto sales and leasing. Dealers and auto finance companies should audit pricing disclosures across all advertising channels now, before these warning letters become enforcement actions.

FTC Moves Toward Federal Framework for Rental Housing Fee Transparency. The FTC announced an Advance Notice of Proposed Rulemaking to explore a new rule governing unfair or deceptive rental housing fee practices, with a 30-day public comment window once published in the Federal Register. The initiative targets the widening gap between advertised rent and what renters pay once mandatory fees are layered on, including amenity, technology, administration, and utility-related charges. Notably, the ANPRM seeks input on the role of property management software, listing services, and online platforms in displaying total rent and itemized fees, signaling that technology providers, not just landlords, are squarely in the FTC’s crosshairs. Paired with the simultaneous warning letters to 97 auto dealer groups, the agency’s junk fee enforcement agenda is clearly cross-sector and accelerating.

FTC Launches Healthcare Task Force to Coordinate Enforcement Across Consumer Protection and Competition. FTC Chairman Andrew Ferguson directed the agency’s Bureaus of Competition, Consumer Protection, and Economics, along with the Office of Policy Planning and Office of Technology, to form a Healthcare Task Force designed to take a coordinated, integrated approach to healthcare enforcement and advocacy. The formation signals that healthcare pricing, pharmacy benefit management, and health data practices are priority targets for the current FTC leadership, and for clients operating in telehealth, AI-assisted clinical decision support, or connected health devices, this task force increases the likelihood that data handling, advertising claims, and AI-enabled service representations will face coordinated scrutiny from both the competition and consumer protection sides of the house simultaneously.

FTC Secures Record $17 Million Settlement Against Xponential Fitness for Franchise Rule Violations. The FTC secured a $17 million settlement against Xponential Fitness, the largest consumer redress ever obtained in an FTC franchise case. The settlement resolves allegations that the company systematically deceived prospective franchisees of brands including Club Pilates, Pure Barre, YogaSix, and StretchLab by misrepresenting how long it takes to open studios, failing to disclose required executive litigation and bankruptcy history, and providing inaccurate or incomplete Franchise Disclosure Documents to buyers paying an average initial fee of $45,000 on 10-year agreements. The settlement prohibits Xponential from making misrepresentations to prospective franchisees and requires full compliance with the Franchise Rule going forward. Any business selling franchises or licensing arrangements should audit its FDDs, earnings claims, and executive disclosure obligations now.

FTC Seeks Public Comment on Negative Option Rule Amendments to Address Deceptive Subscription Practices. The FTC issued an Advance Notice of Proposed Rulemaking seeking public comment on whether to amend its Negative Option Rule, which is the rule governing subscription and pre-notification plans, to better address deceptive or unfair negative option practices in today’s marketplace. The inquiry signals that the Commission views the existing rule as potentially inadequate to address modern subscription billing practices, including those deployed by SaaS, streaming, and app-based services that use dark patterns to impede cancellation. Businesses relying on auto-renewal or continuity billing structures should treat this ANPRM as a prompt to audit their consent, cancellation, and disclosure flows before a revised rule narrows the compliance window, and should consider filing comments.

State

Washington Reduces Commercial Email Spam Damages from $500 to $100 Per Violation, Applies Retroactively. Washington Governor Bob Ferguson signed Engrossed Substitute House Bill 2274, amending Washington’s Commercial Electronic Mail Act to reduce the statutory damages available to recipients of deceptive commercial electronic mail from $500 to $100 per violation (or actual damages, whichever is greater), while retaining the $1,000 per-violation damages available to interactive computer services. The bill also modifies the subject line prohibition, shifting from a rule based on what the subject line contains to what it uses, and expressly applies retroactively to all causes of action commenced on or after the effective date, regardless of when the underlying conduct occurred. The retroactivity provision is a notable legislative choice that will affect pending litigation under the prior $500 damages standard. For businesses engaged in commercial email marketing to Washington residents, the reduction in per-violation recipient damages decreases one category of class action exposure, but the retroactive application means the bill’s effective date, not the date of the underlying conduct, governs which damages standard applies to any newly filed claim.

Litigation

Fifth Circuit Rules Oral Consent Sufficient for Prerecorded Telemarketing Calls. The Fifth Circuit Court of Appeals held in Bradford v. Sovereign Pest Control on February 25, 2026, that oral consent is sufficient for telemarketing calls using prerecorded voices, directly overturning the FCC’s 2013 regulatory requirement for prior express written consent. The Fifth Circuit evaluated the plain meaning of the law and concluded that it only requires prior express consent to send prerecorded calls. The court reasoned that voice and written consent both met the requirements of “prior express consent.” The ruling applies in Texas, Louisiana, and Mississippi, and creates a meaningful circuit split with the Ninth and Eleventh Circuits, where written consent documentation remains essential to defeat TCPA claims. For businesses engaged in outbound telemarketing or operating AI voice campaigns across multiple states, the decision reinforces that venue is now a strategic variable in TCPA litigation and that consent documentation standards must be jurisdiction-specific.

New Mexico Jury Orders Meta to Pay $375 Million for Willfully Violating State Consumer Protection Law in Child Safety Case. A New Mexico state court jury held Meta liable for nearly $400 million in civil damages after a seven-week trial in Santa Fe in which the state attorney general accused the Facebook and Instagram operator of not safeguarding children from child predators and of misleading residents about platform safety. The jury awarded the maximum penalty of $5,000 per violation for both misrepresentation of platform safety and unconscionable trade practices, calculated against 37,500 New Mexico users, one quarter of the state’s teen population, yielding $375 million. Jurors considered Meta’s failure to enforce its ban on users under 13, the role of its algorithms in prioritizing sensational or harmful content, and the prevalence of social media content about teen suicide. A second phase of the trial starts May 4, 2026, where a judge will decide whether Meta created a public nuisance and whether the company must fund public programs to address the harms and implement structural changes including effective age verification.
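The $375 million figure follows directly from the numbers reported above. The short check below reproduces the arithmetic, assuming one violation per user for each of the two claims (an illustrative reading of the verdict as described here, not a statement of the jury's actual methodology).

```python
# Figures as reported in the paragraph above.
PENALTY_PER_VIOLATION = 5_000   # maximum penalty per violation, in dollars
AFFECTED_USERS = 37_500         # New Mexico users counted by the jury
CLAIMS = (
    "misrepresentation of platform safety",
    "unconscionable trade practices",
)


def total_penalty(penalty: int, users: int, claim_count: int) -> int:
    """Total penalty, assuming one violation per user per claim."""
    return penalty * users * claim_count


total = total_penalty(PENALTY_PER_VIOLATION, AFFECTED_USERS, len(CLAIMS))
print(f"${total:,}")  # prints $375,000,000
```

Each claim on its own accounts for $187.5 million, which is why the per-violation cap matters so much once it is multiplied across a user population.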

Meta and Google Found Liable as Defective Products in Landmark Los Angeles Social Media Addiction Trial. The day after the New Mexico verdict, a Los Angeles jury found Meta and Google negligent and ordered them to pay $6 million in damages, with Meta on the hook for 70%, in what represents the first time a jury has found that social media apps should be treated as defective products for being engineered to exploit the developing brains of children and teenagers. Internal Meta documents shown to the jury included a memo stating if “we wanna win big with teens, we must bring them in as tweens,” and data showing 11-year-olds were four times as likely to keep returning to Instagram compared with competing apps despite the platform’s minimum age of 13. While the dollar amount is modest compared to the companies’ valuations, the decision is transformative. By framing the case around defective product design rather than platform content, plaintiffs’ counsel bypassed Section 230 immunity and the verdict may influence the outcome of 2,000 other pending lawsuits. For platform operators, AI companion developers, and any company whose product is designed to use an algorithm to maximize engagement with young users, these back-to-back verdicts on consecutive days represent the most significant shift in platform liability exposure since Section 230 was enacted. Both companies have announced appeals.

Of Note

IAPP Global Privacy Summit 2026. AI Governance Is Not a One-Time Event.

This year’s IAPP Global Privacy Summit made one thing unmistakably clear. AI governance has moved from aspirational to operational, and the legal and privacy community is still catching up. Nearly every session touched on AI in some form, from regulatory timelines to enforcement trends to practitioner war stories.

One session that stood out was “In AI We Trust? Governing High-Stakes AI Before Regulators Step In,” a valuable reminder for any organization deploying AI. Governance is not one and done. A few high points from the presentation worth flagging are provided below.

What makes AI “high stakes” is not the label. It is the consequence profile. The presenters reframed the question entirely. An AI system becomes high stakes when it starts shaping treatment, access, pricing, scrutiny, or recourse for real people, especially when outcomes are hard to understand, challenge, or avoid, and when the system can scale quickly. Teams often underestimate this because systems are framed as operational tools (refund triage, support routing, fraud detection) even though they are functionally making consequential judgments about people.

The reviewed system and the live system may not be the same. This was one of the most useful points in the session. A new signal enters the model. An existing signal starts carrying more weight. A feature expands to a new population. A triage tool quietly starts shaping substantive outcomes. None of these changes may trigger a governance review. That is precisely the problem. The session called this “drift without reassessment,” which is caused by weak or vague reassessment triggers, and identified it as the most common structural failure.

“Assistive” AI still shapes decisions. When AI output becomes the starting point, the recommended default, or the frame through which the next human reviewer reads a case, it is no longer merely assistive. Repeated acceptance turns recommendation into operating practice. This has real implications for how organizations document human oversight. A human touchpoint is different from meaningful human review. The presenters coined the phrase “Expert in the Loop,” which is apt. Not every human understands what the system is doing or is able to provide the oversight needed. In fact, having a core cross-functional team reviewing systems is much better than assigning one or two employees to the “monitoring” role. I would slightly revise the phrase to Experts in the Loop.

The regulatory landscape is already here, in overlapping layers. It spans state laws (California’s CPPA, Colorado AI Act, Virginia, Washington), federal frameworks (FTC Act §5, COPPA amendments, employment and civil rights law, ECOA/FCRA for credit decisions), and international laws already in force (EU AI Act). The cross-cutting themes across all of them were documentation, substantiation, meaningful human review, vendor accountability, and change management.

The presentation made clear that companies should watch for four recurring structural gaps, even in mature programs. First, diffuse authority, where no one clearly has authority to pause or escalate the model. Second, limited visibility into what vendors are doing (e.g., updates and defaults arrive without notice). Third, one-size-fits-all governance, where lower-risk AI gets over-governed and higher-risk AI gets under-reviewed. A continuous program means revisiting the model at regular intervals and having feedback processes in place to identify and catch model drift. Finally, structure drag, meaning a policy that exists on paper but does not connect to a live system.

The session recommended that organizations implement a three-step practical framework. First, companies should map what is live and create an AI system inventory and vendor register. Next, they should figure out which systems need closer review by conducting risk assessments, looking at the consequences of how the system is performing, and creating risk-based tiers. Finally, organizations should manage how governance reaches the live system by conducting role-based training, providing clear guidance on acceptable use, and establishing vendor terms with real change-notification rights.

The closing point was simple and worth repeating. Start with one live AI system and pressure-test six things: owner, tier, last assessment date, change triggers, governing rules, and vendor dependencies. One concrete improvement in one live system teaches more than a perfect framework on paper.
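For teams that keep their AI system inventory in code or a spreadsheet export, the six-item pressure test could be sketched as a simple record with a gap check. This is a minimal illustration. The class, the field names, and the example system are hypothetical and were not part of the presentation, and treating an empty vendor list as "reviewed, no vendors" is an assumption.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class AISystemRecord:
    """Hypothetical inventory entry covering the six pressure-test items."""
    name: str
    owner: Optional[str] = None
    tier: Optional[str] = None                      # e.g., "high", "medium", "low"
    last_assessment_date: Optional[date] = None
    change_triggers: List[str] = field(default_factory=list)
    governing_rules: List[str] = field(default_factory=list)
    # None means "not yet reviewed"; an empty list means "reviewed, no vendors".
    vendor_dependencies: Optional[List[str]] = None


CHECKLIST = (
    "owner",
    "tier",
    "last_assessment_date",
    "change_triggers",
    "governing_rules",
    "vendor_dependencies",
)


def open_items(record: AISystemRecord) -> List[str]:
    """Return the checklist items that are still undocumented for a system."""
    missing = []
    for item in CHECKLIST:
        value = getattr(record, item)
        if item == "vendor_dependencies":
            is_gap = value is None
        else:
            is_gap = not value
        if is_gap:
            missing.append(item)
    return missing


if __name__ == "__main__":
    refund_triage = AISystemRecord(
        name="refund-triage",
        owner="Support Operations",
        tier="high",
        vendor_dependencies=[],
    )
    print(open_items(refund_triage))
```

Running the check against one live system and closing whatever it lists is in the spirit of the session's advice to start small rather than perfect the framework first.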

The content of this article is intended to provide a general guide to the subject matter. Specialist advice should be sought about your specific circumstances.
