Setting the Scene

The EU AI Act has been in force since August 2024, but the ink was barely dry before the European Commission proposed changes to it. In November 2025, the Commission published the Digital Omnibus proposal, a package of targeted amendments aimed at simplifying and clarifying the AI Act, alongside broader adjustments to several pieces of EU digital legislation, including the GDPR and the Data Act. The proposal responds to concerns about legal uncertainty and the practical challenges businesses face in preparing for the new digital legislation.

This blog post provides an overview of where the proposed amendments currently stand. The Digital Omnibus negotiations have now entered the trilogue phase, with the Council and the Parliament having confirmed their respective positions and the aim of reaching agreement by the end of April 2026.

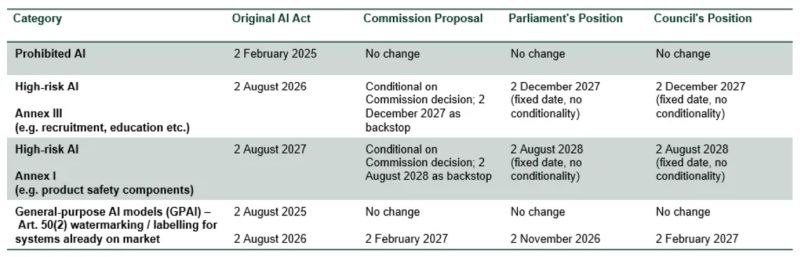

Extended Timeline for High-Risk AI

At the heart of the AI Act is the regulation of high-risk AI, and few aspects of the Digital Omnibus proposal have attracted as much attention as the timeline for applying these rules. At this stage, it appears clear that the deadline will be pushed back by at least a year.

Training AI on Personal Data

The use of personal data in AI training has been a source of uncertainty for businesses. The Digital Omnibus proposal responds directly to this uncertainty by introducing targeted GDPR amendments that centre on how personal data may be used to train AI.

The key changes confirm that legitimate interest can serve as a legal basis for processing personal data in AI development. They also introduce new exceptions for processing sensitive data for bias detection and for special category data encountered incidentally in training datasets. The Parliament and the Council are broadly aligned on these points, with certain clarifications proposed.

AI Literacy: A Shifting Obligation

The AI Act imposed a broad obligation on all organisations that use or develop AI to ensure sufficient AI literacy of their personnel and other stakeholders. The Digital Omnibus proposes shifting the primary responsibility for AI literacy from organisations to the Commission and EU Member States. The Parliament and European data protection authorities have pushed back against the softer approach, stressing that organisations should not be released from a direct AI literacy obligation, whilst the Council has sided with the Commission.

Regardless of where the trilogue lands, businesses should not treat this as grounds for inaction. In Finland, employers are bound by employment law to ensure staff have the competencies needed as working methods evolve. AI literacy is also a risk management imperative: deploying AI without adequate understanding of its functioning and limitations exposes organisations to significant legal, reputational, and operational liability.

Takeaways for Businesses

The coming months will be decisive. The Digital Omnibus amendments are not a rollback of the AI Act — they represent an adjustment to the timeline and a refinement of certain details. The core regulatory framework remains firmly in place, and businesses should not pause their efforts whilst waiting for the trilogue to conclude.

Businesses should understand where their AI use cases sit within the EU AI Act’s framework. Those deploying AI agents should note that these fall within the existing AI Act, as clarified by the AI Office in April 2026.

It is also important to keep in mind that several obligations are already in force: prohibitions on certain AI practices, obligations for general-purpose AI model providers, and AI literacy requirements.

The content of this article is intended to provide a general guide to the subject matter. Specialist advice should be sought about your specific circumstances.
