
On 22 April 2024, the Financial Conduct Authority (FCA) and the Bank of England (including the Prudential Regulation Authority (PRA), together the Bank) published updates on their approach to artificial intelligence (AI). The FCA's update is available here and the Bank's update is available here.

Firms should now expect, where they are using AI, that they will need to be able to explain their use of AI to their regulators. This will involve explaining how risks associated with the deployment of AI have been identified, assessed and managed. The acid test is: if the regulator asks, do you have a convincing narrative about your approach to managing the risks associated with AI?

What do you need to do now?

- Understand, and be prepared to explain, how AI is being used at all levels of your business. This includes suppliers and outsourced service providers – are they using AI, and do you know about it?

- Understand how your legal and regulatory obligations interact with any existing or proposed use of AI in your business.

- Ensure that client and commercial data is protected – you will need to make sure you understand how your staff are using AI, and what systems and data their AI tools can access.

- Put in place and maintain robust governance arrangements, and systems and controls, to discharge your legal and regulatory obligations in connection with AI – this might involve, for example, the implementation of an "AI policy".

The government's five "AI principles" were outlined in its AI Regulation White Paper (published in March 2023) and its "pro-innovation" response (published in February 2024). The principles (and the government's initial guidance to regulators on implementing them) have been established on a non-statutory basis, at least initially. The UK's approach to the regulation of AI stands in contrast to that of the EU, where a highly prescriptive "AI Regulation" (the EU AI Act) was approved in March 2024.

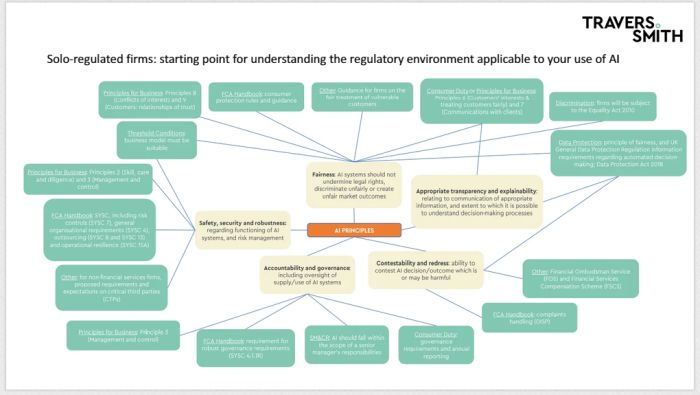

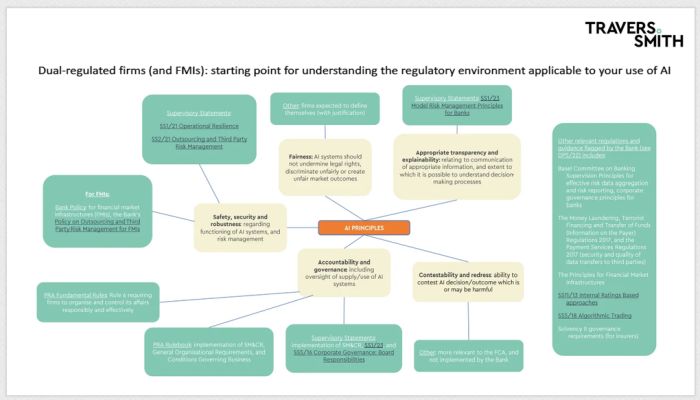

As part of their updates, the FCA and the Bank have summarised, at a high level, the core parts of the existing regulatory framework that they consider map to the government's five "AI principles" – we have summarised this "mapping" in sections 1 and 2 below.

By extension, firms will be able to use the areas of law and regulation identified by the FCA and the Bank as a starting point when assessing how their use of AI interacts with their existing obligations.

The FCA and the Bank's updates follow published correspondence in which the FCA and the Bank (among other regulators, such as the Competition and Markets Authority) were asked to publish an update on their "strategic approach to AI and the steps they are taking in line with the expectations in the White Paper" by the end of April 2024.

Solo-regulated firms: starting point for understanding the regulatory environment applicable to your use of AI

Dual-regulated firms (and FMIs): starting point for understanding the regulatory environment applicable to your use of AI

Insights, themes and observations

The FCA and the Bank updates contain a raft of information – some new, some not. We have identified certain themes and observations, together with potential actions for firms.

The FCA and the Bank are clear that they support the government's approach to the regulation of AI (and that there is no immediate need for any separate "UK AI Regulation", at least for financial services). In the Bank's words, it "believe[s] we have in place a regulatory framework, grounded in our statutory objectives, that will appropriately support the delivery of the benefits that AI/ML can bring, while addressing the risks, in line with the principles set out in the Government's White Paper".

A cynic might say that this is entirely expected: no regulator would want to suggest that they had been caught asleep at the wheel or had been inactive where a risk had already materialised or come close to it. However, in the context of the principles-based approach of UK financial regulation and technology-agnostic supervision, and given the potential dangers of a 'regulate first, ask questions later' approach, it is reasonable to expect that existing frameworks would be appropriate to manage the risks from emerging or developing technologies (like AI), at least at this stage.

Theme 1: use of service providers by firms

- Operational resilience, outsourcing and critical third parties (CTPs) are central to the regulators' analysis of the risks and opportunities arising out of AI. See our separate briefing on the proposed CTPs regime.

Potential actions/questions:

- Do you have the information you need to assess the risks to your business arising from your use of AI or the use of AI by your service providers? Do you have a standard list of questions regarding your service providers' use of AI? (Ask us about ours if not.)

- Do your policies and procedures for operational resilience, outsourcing, business continuity, etc. sufficiently deal with the risks to your business arising from your use of AI or the use of AI by your service providers?

- Do you know whether your arrangements with service providers using or providing AI tools constitute outsourcing (and, if they do, are they critical or important outsourcings)?

- If you are using AI products, like Copilot, have you taken steps to control and limit the information and data those products can access in your organisation? Have you made sure that those products do not have access to inappropriate information or data, including inside information, sensitive personal data, or other confidential information?

- The FCA says that "[t]he adoption of AI may lead to the emergence of third-party providers of AI services who are critical to the financial sector. If that were to be the case, these systemic AI providers could come within the scope of the proposed regime for CTPs, if they were designated by HM Treasury". The Bank's update includes similar statements.

- FCA concerns about "Big Tech", in the context of its competition remit, are not new. On 22 April 2024, the FCA published FS24/1 Potential Competition Impacts from the Data Asymmetry between Big Tech Firms and Firms in Financial Services. The ability of Big Tech firms to use AI to draw insights from their own data or financial services data could, respondents thought, exacerbate existing asymmetries. The development of open finance could feed into such asymmetries, giving Big Tech access to ever larger data sets.

Theme 2: AI cannot be addressed by the regulators (or firms) in isolation

- Both the FCA and the Bank refer to the role of data protection legislation in meeting the AI principles (specifically the principles of fairness, appropriate transparency and explainability, and contestability and redress). It is clear that there will be a requirement for coordination between sectoral regulators and this might support calls for an overarching "AI Authority", as suggested in Lord Holmes' private member's bill on AI regulation.

- Regulators' work on "Big Tech" and potential data asymmetries (which would affect the efficacy and impact of AI tools) cannot be carried out in a silo: the "reach" of financial services regulators is limited by the regulatory perimeter (determined by the government).

- Both reports highlight that AI, and its development, form one part of a broader technological landscape. Both reports mention, for example, the potential risks and opportunities that might arise out of quantum computing. AI cannot be considered, or regulated for, in a vacuum (even if it is a "hot topic" at the moment).

Potential actions/questions:

- Other regulators are also publishing updates on AI – see, for example, the Competition and Markets Authority's "strategic update", which overlaps with the FCA and the Bank papers in some of its consumer-related concerns – and you will need to take into account the broader legal and regulatory landscape (not limited solely to financial regulation) when understanding your obligations in relation to AI.

- Firms should also be mindful of non-UK legal considerations, and legislation or regulation which has extra-territorial effect (e.g. the EU AI Act, and the US Blueprint for an AI Bill of Rights) – it is critical that firms active in multiple jurisdictions take a holistic approach to their AI-related work.

- Consider your use of AI (and other technologies) as a subject which potentially engages a broad range of legal considerations as well as financial services regulation, for example, intellectual property, data protection and employment law.

- In its update, the Bank flags that a broad range of rules, regulations and guidance relevant to mitigating the risks associated with AI (domestically and internationally) was set out in DP5/22 Artificial Intelligence and Machine Learning.

Other observations

- The FCA has discussed and proposed various changes to the Senior Managers & Certification Regime (SM&CR) in recent years. In DP5/22 the FCA and the Bank explicitly sought feedback on whether a new Prescribed Responsibility for AI should be allocated to a Senior Manager, to which the industry response was (largely) "no". The FCA expects to issue a consultation paper on SM&CR in June 2024, which is part of the wider Edinburgh Reforms agenda (rather than an AI-specific development), but the consultation might refer to AI.

- The FCA is exploring changes to its innovation services (which currently include a Digital Sandbox and a Regulatory Sandbox) to enable the testing, design, governance and impact of AI technologies within an "AI Sandbox".

- Firms will need to think carefully about the total universe of regulatory obligations that are impacted by their use of AI. This will depend on the extent of AI usage by the firm. Some of these may not be immediately obvious – for example, for firms subject to the Investment Firm Prudential Regime, does the use of AI have an impact on their internal capital and risk assessment?

- For firms regulated by the FCA, communications with customers about how and where AI is used are a key consideration. For firms with retail customers, the Consumer Duty is key. Firms that do not have retail customers will also need to take care; the FCA highlights the Principles for Businesses as relevant to transparency and communications. Equally, firms should take care not to fall into the trap of using the AI "buzzword" inappropriately: non-UK regulators have taken action against firms for "AI washing" (e.g. see the recent US Securities and Exchange Commission action against investment advisers making false claims about their use of AI).

Potential actions/questions:

- Consider how and when you are communicating with customers about how AI is used in connection with the services you provide to them: do they have enough information (and the right information) to understand how AI is being used and how it impacts them?

How are the regulators using AI?

It is unsurprising that the FCA and the Bank are already using AI. The FCA, for example, is using tools to detect, review and triage potential scam websites, and in-house synthetic data to assess firms' sanctions screening systems. The Bank is using AI "to support and enhance [its] capabilities", including in predictive analytics and data analysis. The PRA is using a cognitive search tool to identify patterns in unstructured and complex firm management information. Neither report contained any indication of how the regulators' use of AI would be governed or audited.

The FCA and the Bank are considering other uses of AI and have established specialist groups and divisions for this work. In particular, the FCA is clear that, as financial trading strategies evolve, so too must trade surveillance strategies evolve to monitor market dynamics and detect market abuse – especially more complex types of market abuse which are currently difficult to detect (e.g. cross-market manipulation).

What are the regulators working on?

The FCA and the Bank have each provided areas for future work (albeit without any particular timeline attached, in most cases). In particular, both regulators are clear that they are continuing to build their understanding and are continuing to assess and manage AI-related risks. Some key areas of work, in summary, are:

- Cooperation. The FCA and the Bank both commit to continue cooperation in the future, including through producing a third edition of the joint FCA-Bank "machine learning survey". The FCA sits on the Digital Regulation Cooperation Forum (DRCF) alongside Ofcom, the Information Commissioner's Office and the Competition and Markets Authority; the DRCF launched its "AI and Digital Hub" on 22 April 2024. The Bank has committed to work with the DRCF on "selected AI/ML projects".

- Engagement. The FCA and the Bank have both engaged, and will continue to engage, with relevant external organisations and stakeholders. This includes international bodies, especially in light of the next AI Safety Summit (which will take place in South Korea in May 2024). The FCA, in particular, is heavily involved in the AI Working Group of the International Organization of Securities Commissions.

- Innovation. The FCA will continue to provide its Digital Sandbox and Regulatory Sandbox and will be exploring changes to its innovation services which could enable the testing, design, governance and impact of AI technology within an AI Sandbox. The Bank is considering how to establish a technology environment to enable experimentation in innovative technologies.

The content of this article is intended to provide a general guide to the subject matter. Specialist advice should be sought about your specific circumstances.